Google changes its algorithm regularly to deliver the most relevant answers to users, and these changes matter to website owners. Constant tweaks to Google’s algorithm push webmasters to create better sites, experiences and content. Dedicated SEO services are available to ensure that your business website adapts to new Google algorithm changes. Strategic SEO helps increase visibility among your target audience and strengthens brand recognition and identity.

Broad Core Algorithm Update

Broad core updates are designed to produce widely noticeable effects across search results in all countries and all languages. They can affect the online search visibility of websites. Whenever an update is rolled out, Google re-evaluates the SERP ranking of websites based on their expertise, authoritativeness, and trustworthiness (E-A-T). With such updates, Google aims to return more contextually relevant results for search queries and to raise the quality of the content it surfaces.

The first core update of 2020 was launched in the second week of January. Soon afterwards, the coronavirus pandemic drastically changed users’ search behavior. Google even said “There have never been so many searches for a single topic as there have been for COVID-19.” People started looking online for businesses offering a range of services and products, while categories that were popular before COVID-19 were searched for far less.

The second core update came in May 2020, when Google was dealing with the unique challenge of catching up with how the world was searching. If your site was negatively impacted by this update, Google suggests that no specific action is required. Google points out, “We know those with sites that experience drops will be looking for a fix, and we want to ensure they don’t try to fix the wrong things. Moreover, there might not be anything to fix at all.” However, Google also suggests that evaluating your content, and checking whether it is original and informative enough to offer valuable insights, can help boost ranking.

Fixing Your Website after the Core Update

As mentioned earlier, there are no particular fixes for the new core update. However, there are some viable strategies that can help improve your content so that traffic and ranking increase as well. Monitor ranking fluctuations constantly, and pay particular attention to pages that are not ranking well despite offering quality content. If your site ranked well both before and after the core update, it is best to stick with your current content strategy while looking for new ways to improve your rankings. Apart from generating quality content, it also helps to focus on white hat SEO methods such as influencer outreach through a blogger outreach specialist.

Search quality guidelines and E-A-T: As many SEOs have already stated, you should refer to the search quality raters’ guidelines (which have moved to a new location) and focus on Expertise, Authoritativeness and Trustworthiness. The guidelines help you assess how your content is doing from an E-A-T perspective and make the necessary improvements. It is highly recommended to use white hat techniques such as blogger outreach and broken link building as part of your SEO strategy to earn high-quality links. Here are some tips for improving your E-A-T rating:

- Add Author Byline to All Blog Posts

It is important for Google to know the authenticity of the person who is providing information to users. For instance, if your site falls under the YMYL category and posts content related to healthcare, wellness, or finance, it is important to ensure that the author is an expert in that field. This helps make sure that the content displayed to users is drafted by trustworthy, certified authors. Having general content writers produce the content is a common practice among YMYL sites. However, Google does not favor content focused only on promotion; it gives more weight to high-quality, trustworthy content. An author byline is a short, unique bio of the author, used for promotional opportunities or creative projects. Your bio should be relevant, clear, concise and professional. Begin with your name and occupation, and write about yourself in the third person. Avoid fancy jargon and job titles; keep it simple, clean and interesting.

- Eliminate Scraped/Duplicate Content

By analyzing individual posts and pages, Google calculates the E-A-T score of a website. If you are scraping or duplicating content from another website, there is a higher chance that your website will be hit by an algorithm update. Paraphrasing content doesn’t make it unique, and Google can easily identify content that has simply been copied and paraphrased. So, if you feel your website has scraped or rewritten content, it would be ideal to remove it. Google considers E-A-T one of the top 3 considerations for page quality, and duplicate content can affect your E-A-T score. Although there is no step-by-step explanation of how the algorithm evaluates sites and queries, the following are two of the many ways in which search engines evaluate E-A-T, as pointed out in an e2msolutions.com article:

- TrustRank: TrustRank was first developed as a collaboration between computer scientists at Stanford and Yahoo!, but Google has a patent for a similar algorithm, and modern web search engines are almost certainly employing some variation on the concept. The idea behind TrustRank is as follows:

- It first identifies a set of trustworthy “seed” websites or pages. These sites are evaluated by experts to determine if they are trustworthy.

- TrustRank then flows through the links from such seed sites to other parts of the internet. It gets increasingly diluted as it moves away from the seed sites and gets divided among more links.

- Therefore, pages that are closer to the seed set in the link graph are considered more trustworthy. Pages far away from the seed set are considered less trustworthy.
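The propagation idea described above can be illustrated with a small sketch. This is not Google’s or Yahoo!’s actual implementation; it is a simplified, seed-biased PageRank-style loop. The function name `trust_rank`, the `damping` value, and the toy link graph are all assumptions made for illustration.

```python
def trust_rank(links, seeds, damping=0.85, iterations=50):
    """Toy trust propagation (illustrative only).

    links: dict mapping each page to the list of pages it links to.
    seeds: set of pages assumed to be hand-verified as trustworthy.
    """
    pages = set(links) | {t for targets in links.values() for t in targets}
    # Trust is injected only at the seed pages.
    seed_score = {p: (1.0 / len(seeds) if p in seeds else 0.0) for p in pages}
    trust = dict(seed_score)
    for _ in range(iterations):
        nxt = {p: (1 - damping) * seed_score[p] for p in pages}
        for page, targets in links.items():
            if not targets:
                continue
            # A page's trust is split evenly among its outgoing links,
            # so it is diluted the further it travels from the seeds.
            share = damping * trust[page] / len(targets)
            for target in targets:
                nxt[target] += share
        trust = nxt
    return trust


# Hypothetical link graph: "seed" is the only trusted starting page.
graph = {
    "seed": ["a", "b"],
    "a": ["c"],
    "c": ["d"],
    "spam": ["d"],  # not reachable from the seed, so it receives no trust
}
scores = trust_rank(graph, seeds={"seed"})
for page, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{page}: {score:.4f}")
```

In this toy graph the score drops with each hop away from the seed, and a page that no trusted page links to ends up with a score of zero. For simplicity, pages without outgoing links simply stop passing trust on.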

- Knowledge-based trust (KBT): This was developed to evaluate the trustworthiness of web sources. It is likely that this algorithm, or a similar one, has been added to the core algorithm. The knowledge-based trust algorithm works as follows:

- Facts are extracted from web pages in the form of subject, predicate, object. There are 16 different types of extractors used to pull these facts from web pages, and the facts are cross-referenced for accuracy with Freebase.

- A first estimate of the accuracy of any given fact is then made by combining the popularity of the fact among various websites with its consistency across the various extraction methods.

- Based on how accurate its facts are, each source is awarded a trustworthiness score using an iterative process. This trustworthiness score is used to re-evaluate how accurate the facts are, and the result is fed back into the algorithm.

- This process continues until the trustworthiness score of each site converges to a constant value.

If your web pages state facts that are stated by other trustworthy sites as well, then your pages would be considered trustworthy.
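The iterative loop described above can be sketched in a few lines. This is a heavily simplified truth-finding loop, not the probabilistic model used in the actual knowledge-based trust research: it skips the extractor step entirely and only shows how fact accuracy and source trust can reinforce each other until the scores settle. The function name, the starting trust of 0.5, and the sample claims are assumptions for illustration.

```python
from collections import defaultdict


def knowledge_based_trust(claims, iterations=100, tol=1e-9):
    """Toy trust estimation (illustrative only).

    claims: list of (source, (subject, predicate), object) tuples.
    Returns (trust per source, belief per (item, object) pair).
    """
    sources = {source for source, _, _ in claims}
    trust = {s: 0.5 for s in sources}  # uninformed starting point
    belief = {}
    for _ in range(iterations):
        # Step 1: belief in each candidate value is its trust-weighted
        # share of the votes for that (subject, predicate) item.
        votes = defaultdict(lambda: defaultdict(float))
        for source, item, value in claims:
            votes[item][value] += trust[source]
        belief = {}
        for item, by_value in votes.items():
            total = sum(by_value.values())
            for value, weight in by_value.items():
                belief[(item, value)] = weight / total if total else 0.0
        # Step 2: a source's trust is the mean belief in the facts it states.
        sums, counts = defaultdict(float), defaultdict(int)
        for source, item, value in claims:
            sums[source] += belief[(item, value)]
            counts[source] += 1
        new_trust = {s: sums[s] / counts[s] for s in sources}
        converged = max(abs(new_trust[s] - trust[s]) for s in sources) < tol
        trust = new_trust
        if converged:  # scores have stopped changing
            break
    return trust, belief


# Hypothetical claims: two sites agree with each other, one disagrees.
sample_claims = [
    ("site_a", ("Paris", "capital_of"), "France"),
    ("site_b", ("Paris", "capital_of"), "France"),
    ("site_c", ("Paris", "capital_of"), "Germany"),
    ("site_a", ("Water", "boils_at_celsius"), "100"),
    ("site_b", ("Water", "boils_at_celsius"), "100"),
    ("site_c", ("Water", "boils_at_celsius"), "50"),
]
trust, belief = knowledge_based_trust(sample_claims)
for site in sorted(trust):
    print(site, round(trust[site], 3))
```

In this toy data set, the two sites that corroborate each other end up with high trust, while the site whose facts nobody else states ends up with low trust.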

- Focus on Personal Branding

Include an “About Us” page on your website that provides valuable inputs to Google in the form of schema mark-up. Positive testimonials and customer reviews, both within the site and outside, can also boost the trustworthiness of your website. Google’s quality rater guidelines also ask webmasters to display the contact information and customer support details for YMYL sites.
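As a rough illustration of what such schema mark-up might look like, the sketch below builds a schema.org `Organization` object and wraps it in a JSON-LD script tag that could be placed on an “About Us” page. The company name, URLs, phone number and email address are placeholders, and the choice of properties is only an assumption about what a site might want to expose.

```python
import json

# Placeholder values: replace with your organization's real details.
about_page_schema = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Company",
    "url": "https://www.example.com",
    "contactPoint": {
        "@type": "ContactPoint",
        "contactType": "customer support",
        "telephone": "+1-555-0100",
        "email": "support@example.com",
    },
    # Profiles on other platforms that belong to the same organization.
    "sameAs": ["https://www.linkedin.com/company/example"],
}

# JSON-LD is embedded in the page inside a script tag of this type.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(about_page_schema, indent=2)
    + "\n</script>"
)
print(script_tag)
```

The printed tag can be pasted into the page’s HTML. It is worth checking the result with a structured data testing tool before publishing.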

You can communicate your brand message consistently in verbal interactions, on your CV, on your institution’s faculty pages, in your social media bios, on platforms like Doximity and ResearchGate, and on your own website.

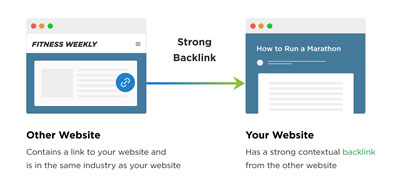

- Focus on the Quality of Backlinks Rather Than Quantity

Google’s search quality guidelines suggest that websites with high-quality backlinks have a good E-A-T score. Conversely, if you focus on building low-quality links through PBNs and blog comments, you may invite Google’s wrath and hurt your site’s E-A-T rating. The following types of backlinks are valuable:

- Links that come from trusted and authoritative websites

- The link’s anchor text includes target keywords

- The site linking to you is topically related to your website

- The link is a “Dofollow” link

Image source: www.backlinko.com
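HTML has no “dofollow” attribute: a link counts as followed by default unless its `rel` attribute says otherwise. The sketch below uses Python’s standard `html.parser` module to list the links in a piece of HTML together with their anchor text and whether they would count as “Dofollow”. The class name `LinkAuditor`, the sample HTML, and the decision to treat `nofollow`, `sponsored` and `ugc` as non-followed are assumptions for illustration.

```python
from html.parser import HTMLParser


class LinkAuditor(HTMLParser):
    """Collects href, anchor text and follow status for each <a> tag."""

    NOT_FOLLOWED = {"nofollow", "sponsored", "ugc"}

    def __init__(self):
        super().__init__()
        self.links = []
        self._current = None

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        href = attrs.get("href")
        if href is None:
            return
        rel_values = set((attrs.get("rel") or "").lower().split())
        self._current = {
            "href": href,
            "anchor": "",
            "dofollow": not (rel_values & self.NOT_FOLLOWED),
        }

    def handle_data(self, data):
        # Accumulate the visible anchor text of the link being read.
        if self._current is not None:
            self._current["anchor"] += data

    def handle_endtag(self, tag):
        if tag == "a" and self._current is not None:
            self._current["anchor"] = self._current["anchor"].strip()
            self.links.append(self._current)
            self._current = None


# Hypothetical snippet of a page that links to your site.
sample_html = (
    '<p><a href="https://example.com/guide">SEO guide</a> and '
    '<a rel="nofollow" href="https://example.org/x">comment link</a></p>'
)
auditor = LinkAuditor()
auditor.feed(sample_html)
for link in auditor.links:
    print(link)
```

A check like this covers only the anchor text and follow status from the list above. Whether the linking site is authoritative or topically related still needs a manual or tool-based review.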

Latest Core Update

The latest core update was rolled out on December 3, 2020. Like all core updates, this was a global update that was not specific to any region, language or category of websites. It was a classic broad core update of the kind Google releases every few months, and it arrived after the longest stretch since the previous confirmed broad core update.

For better ranking and positive results, website owners have to keep a close watch on changing trends. If you don’t keep up with the changes, your web pages could experience a significant drop in ranking, and websites that use spammy techniques will be penalized by Google. To rank better in search results, it is important to carry out SEO activities regularly. A reliable SEO service keeps a close watch on current market trends, develops strategies and plans according to present requirements, and helps improve your ranking and traffic even with new and frequent algorithm changes.